Welcome to DodoChat.

I started building DodoChat to understand what it's like to work with LLMs beyond basic prompt-and-response. I wanted a space to explore how streaming, tool calls, and document context actually feel in a real application.

Most of my time has been spent on the details that make AI feel less like a black box and more like a tool. This means fast, token-by-token streaming, the ability for the model to interact with files, and maintaining a clear conversation history.

Core Focus

The project is primarily an exploration of three things:

What DodoChat Supports

The current version of the experiment is built to handle more than just text. It integrates directly with external data and the local file system using a suite of custom tools:

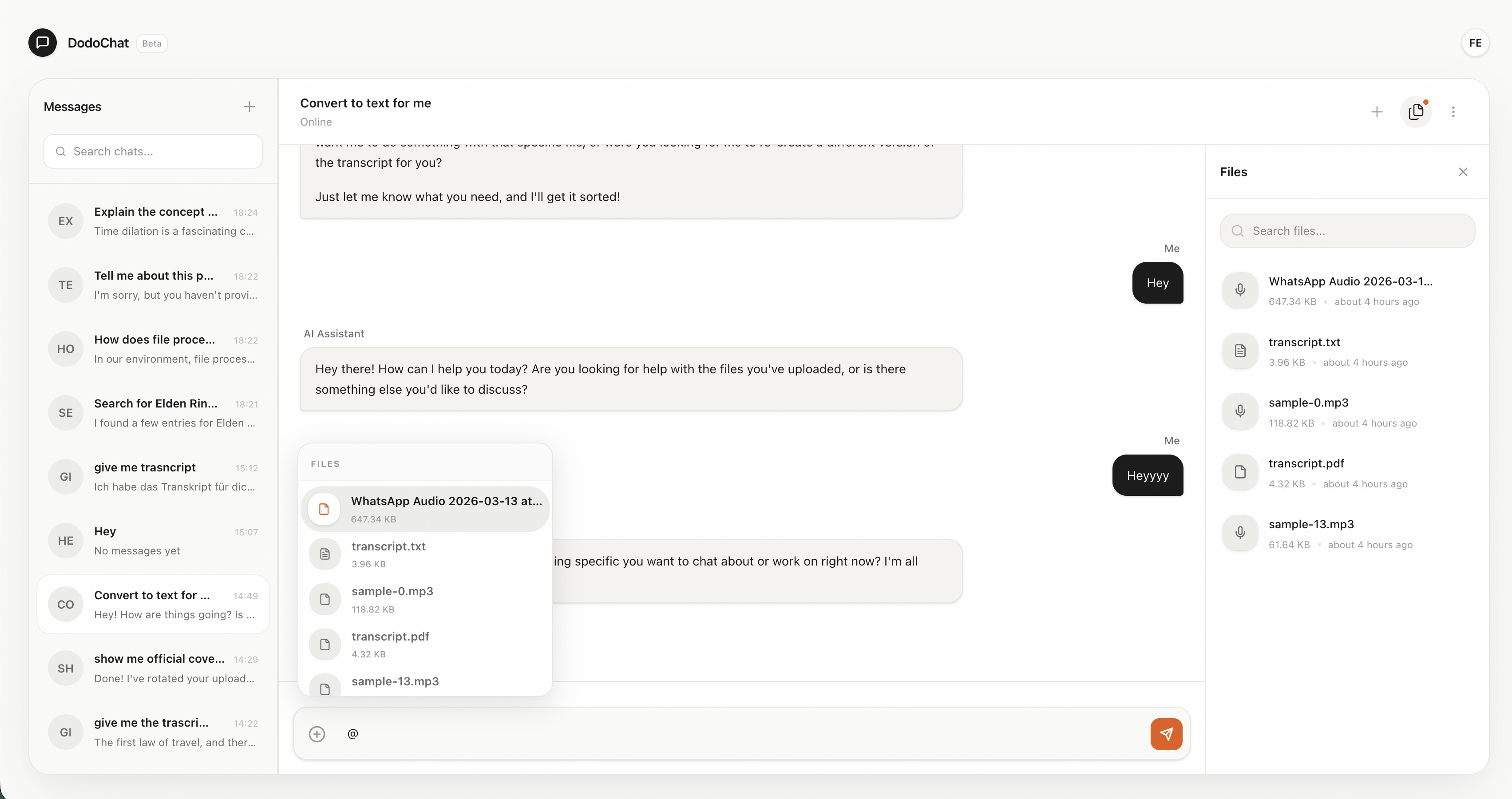

- Document Intelligence & RAG: It uses vector embeddings to index uploaded documents. When you ask a question, it searches for the most relevant chunks of text to provide accurate context to the model.

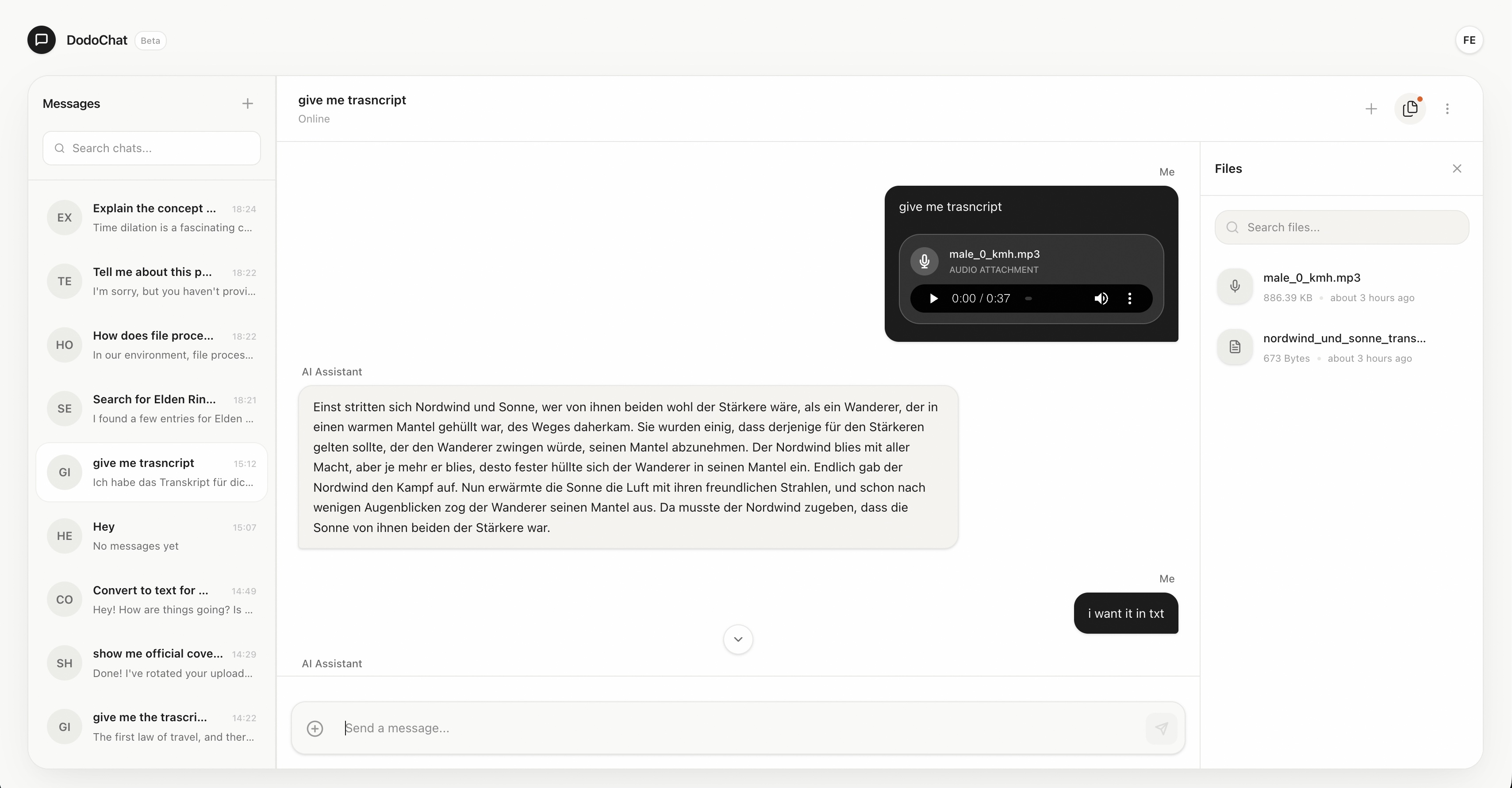

- File GenerationYou can ask the AI to summarize a conversation or transform data into portable formats. It currently supports generating both

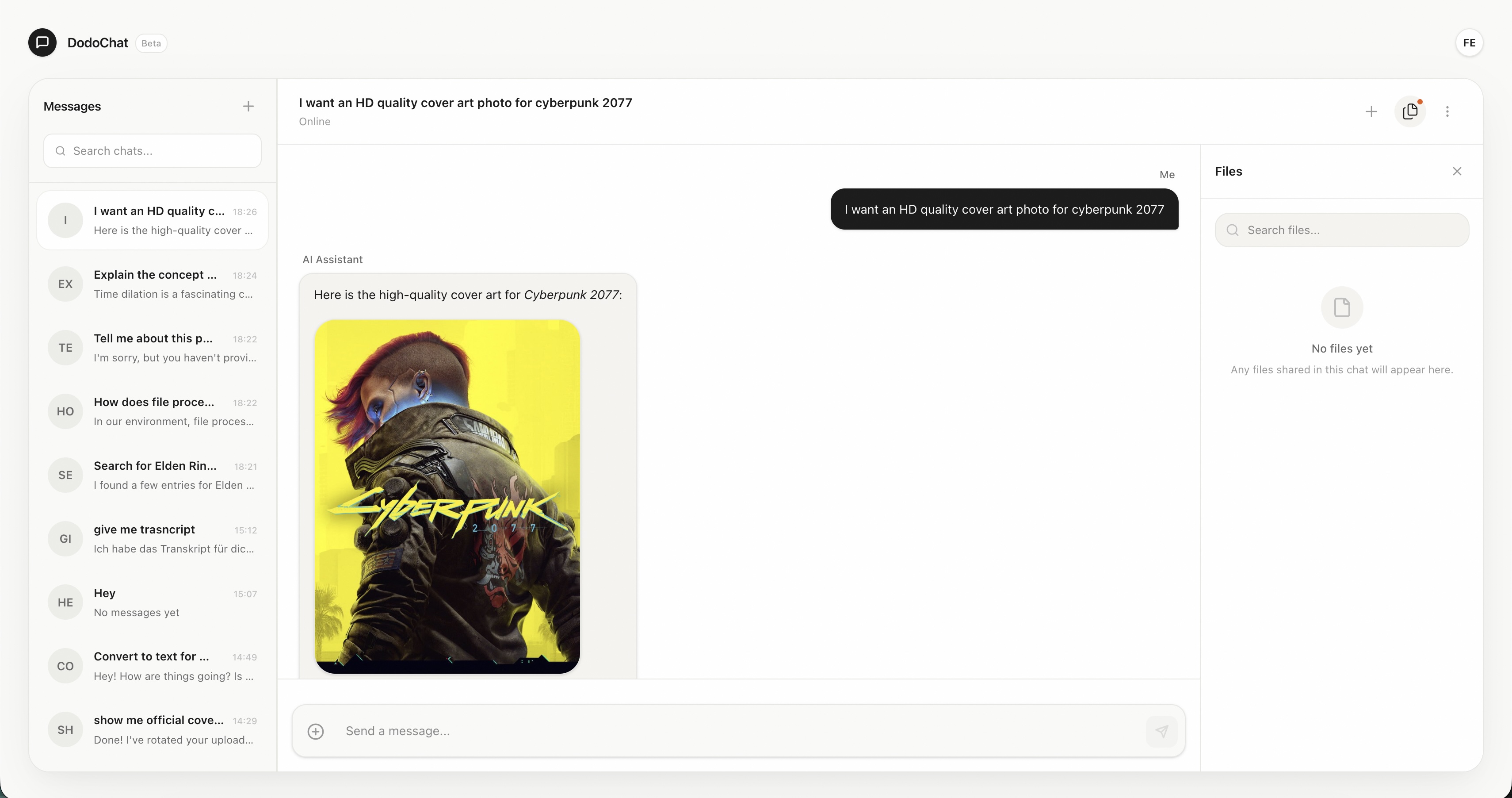

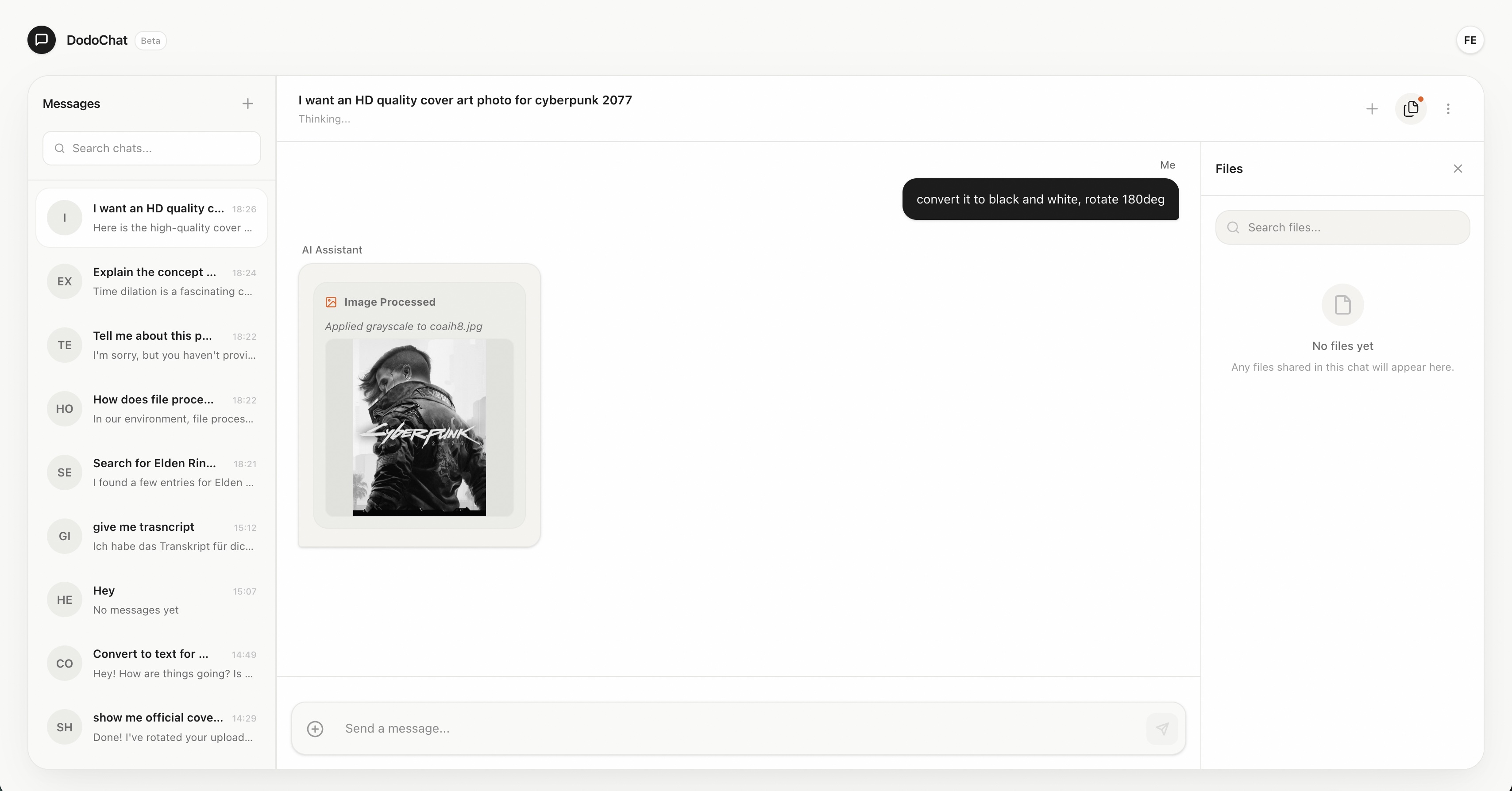

.txtand.pdffiles on the fly. - Image & Media ReasoningThe system handles multi-modal inputs natively. It can "see" uploaded images or process external media (like gaming covers from IGDB) and apply effects using sharp via tool calls.

- Gaming Data & IGDB: Integrated with the IGDB database, the AI can search for game details, fetch high-resolution screenshots, and reason about release dates or platform availability.
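To make the document retrieval step more concrete, here is a minimal sketch of ranking indexed chunks against a question by cosine similarity. It is not DodoChat's actual code: the `Chunk` shape, the function names, and the tiny 3-dimensional vectors are all assumptions for illustration, and real embeddings would come from an embedding model rather than being written by hand.

```typescript
// Hypothetical shape of an indexed chunk; the real schema may differ.
interface Chunk {
  text: string;
  embedding: number[];
}

// Cosine similarity between two vectors of equal length.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("Embedding dimensions must match");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  // Treat a zero vector as having no similarity to anything.
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the k chunks most similar to the query embedding, best match first.
function topKChunks(query: number[], chunks: Chunk[], k: number): Chunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosineSimilarity(query, chunk.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((entry) => entry.chunk);
}

// Toy vectors stand in for real embeddings.
const index: Chunk[] = [
  { text: "Refund policy", embedding: [1, 0, 0] },
  { text: "Shipping times", embedding: [0, 1, 0] },
  { text: "Return window", embedding: [0.9, 0.1, 0] },
];

// The selected chunk texts would be passed to the model as context.
const context = topKChunks([1, 0, 0], index, 2).map((c) => c.text);
```

A linear scan like this is fine for a handful of documents. A larger index would likely rely on a vector store or an approximate nearest-neighbor search instead.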

The Technical Stack

DodoChat is built with a focus on low latency and developer ergonomics. The architecture is designed to be lean, using modern tools that allow for rapid experimentation:

- Runtime: Built on Bun and Node.js for high-performance server-side execution.

- Intelligence: Powered by Google's Gemini models (including Flash for fast inference and Text-Embedding-001 for RAG).

- Orchestration: Uses the Vercel AI SDK to manage tool calling, streaming, and complex multi-step reasoning.

- Frontend: A React-based single-page application built with Vite and TanStack Router for type-safe, fluid navigation.
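As a rough illustration of what token-by-token streaming looks like on the consuming side, the sketch below reads an async stream of text pieces and reports the growing message after each one. It uses plain async iteration only and does not reproduce the Vercel AI SDK's API. `fakeTokenStream` and `renderStream` are hypothetical names, and the generator merely stands in for a real model stream.

```typescript
// Stand-in for a model stream: yields the text one piece at a time,
// keeping whitespace so the pieces can be joined back together.
async function* fakeTokenStream(text: string): AsyncGenerator<string> {
  for (const piece of text.split(/(\s+)/)) {
    if (piece.length > 0) yield piece;
  }
}

// Consume a stream piece by piece, notifying the caller with the partial
// message after every piece, and resolve with the full message at the end.
async function renderStream(
  stream: AsyncIterable<string>,
  onUpdate: (partial: string) => void,
): Promise<string> {
  let message = "";
  for await (const token of stream) {
    message += token;
    onUpdate(message);
  }
  return message;
}
```

In a React frontend, `onUpdate` would typically be a state setter, so the message re-renders as each piece arrives instead of waiting for the full response.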

Open Source

DodoChat is open source. You can find the full implementation, from the tool-calling logic to the streaming architecture, on GitHub.

DodoChat is an ongoing experiment in AI interaction. It's a space where I explore new patterns for digital assistance, aiming to build tools that feel as responsive and capable as they are intelligent.

Want to try it out?

You can use the current version of the app to chat with Gemini and test the file processing features.